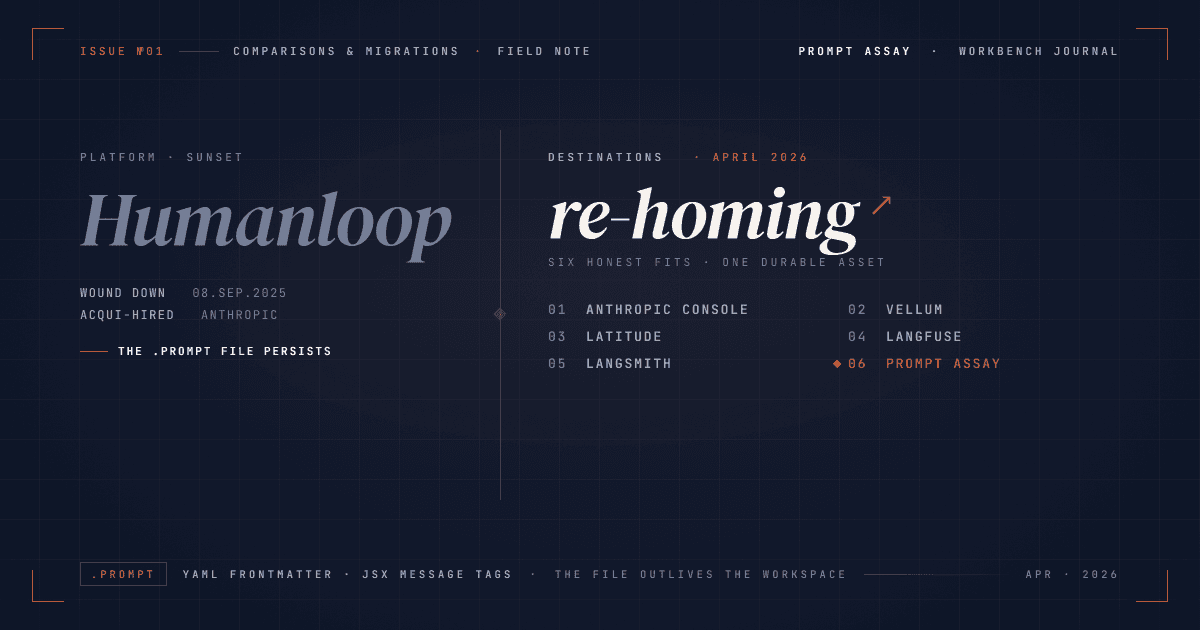

Migrate from Humanloop: a 2026 re-home guide

Humanloop shut down on September 8, 2025 after Anthropic acqui-hired its team. All Humanloop data was deleted on that date. If you picked a Humanloop alternative in a rush and the choice isn't sticking, this guide covers the durable asset (.prompt files), the destination landscape, and the BYOK cost math for re-homing without a deadline this time.

What happened to Humanloop (the seven-minute version)

Humanloop was founded in 2020 as a UCL spinout and raised about $8M in seed funding before the team joined Anthropic. On August 13, 2025, TechCrunch reported that Anthropic had acqui-hired (bought the team, not the company's assets or IP) the three co-founders (Raza Habib, Peter Hayes, Jordan Burgess) and roughly a dozen engineers. The legal entity kept running, wound down paid service, and the platform went dark.

The operational timeline is short and cold:

- July 30, 2025: Humanloop billing stopped. No further customer charges (Humanloop changelog, Aug 2025).

- August 13, 2025: Anthropic acqui-hire announced.

- September 8, 2025: Platform, API, and UI permanently offline. All user data deleted.

The team is now at Anthropic. The Anthropic Console has a Workbench for prompt authoring, an Evaluate tool with test cases, prompt versioning, side-by-side compare, and 5-point grading, and a shared prompt library with revision history. Worth naming explicitly: those Console features predate the Humanloop acqui-hire. The honest framing isn't "Humanloop's IP lives on as the Workbench tab." It's "the team now builds inside Anthropic, and the Console covers a similar product surface for Claude-only teams." Good-faith sentences, nothing more.

That's the whole timeline. The rest of this guide is about what you do with it in 2026.

Why the first migration didn't stick (if it didn't)

The common pattern we keep hearing about has three shapes.

Pattern A: the feature-set match that wasn't a fit match. Tool-owner migration guides pitched a feature list. Features overlapped, but the workflow didn't. After a week, the team stopped opening the tool and prompts drifted back into .prompt files in git.

Pattern B: the pricing model that looked cheap in August. A platform-fee tier that fit August's volume looks less generous at current volume: trace overages, per-transaction fees, and per-seat bills that grow with the team. The monthly charge climbed, and nobody flagged it until the finance partner did six months in.

Pattern C: the framework-first tool when you wanted a workbench. A few teams picked tools that assume you're already using a specific agent framework. The ergonomics are built for LangChain, Mastra, or a vendor-specific SDK. If your production prompts don't live in that framework, the tool feels like an adapter.

None of these are failures of the replacement platform in isolation. They're mismatches between a rushed choice and a real workflow. The useful move in 2026 is to treat the first migration as reconnaissance and pick again with the specific mismatch named.

The 2026 destination landscape (honest)

Treat the .prompt file as the durable asset. The workbench is replaceable; the file isn't. That reframe turns "which platform do I marry?" into "which platform imports my file and fits my workflow for the next six months?"

There is no single right answer. Seven platforms cover most of the honest fits, including the two Humanloop's own migration guide names: Langfuse and Braintrust. What follows is a flat comparison; the columns are chosen so you can spot your own constraints quickly. Pricing was verified live on April 25, 2026 against each vendor's public pricing page.

| Platform | Platform-fee model (verified Apr 25 2026) | Provider scope | Deployment | .prompt import | Honest fit |
|---|---|---|---|---|---|
| Anthropic Console | Free (Claude API usage billed separately) | Claude only | Hosted | Not supported directly | Claude-only teams who want the simplest path |
| Vellum | Pro $500/mo, Enterprise custom | Multi-provider | Hosted | Not documented; manual | Compliance-sensitive teams willing to pay for managed service |
| Latitude | Team $299/mo, Scale $899/mo, or self-host free | Multi-provider (OpenAI, Anthropic, Google, Bedrock, Azure, Cohere, Mistral, Ollama) | Hosted or self-hosted | Not documented; manual | Teams who want open-source self-hosting with a product surface |
| Langfuse | Hobby free, Core $29/mo, Pro $199/mo, Enterprise $2,499/mo, or self-host free (MIT) | Any provider via OpenTelemetry hooks | Hosted or self-hosted | Not supported directly | Observability-first teams already running ClickHouse, or self-hosters |
| Braintrust | Starter free, Pro $249/mo, Enterprise custom | Multi-provider via AI Gateway endpoint | Hosted (proxy in the request path) | Not supported; TypeScript-defined prompts, manual transformation | Well-funded eval-driven teams who accept a managed gateway between their app and the model |
| LangSmith | Plus $39/seat + $2.50 per 1K trace overage | Multi-provider | Hosted | Not supported directly | Teams already on LangChain or LangGraph |
| Prompt Assay | Solo $49/mo, Team $99/seat/mo, Free tier | Multi-provider (Anthropic, OpenAI, Google) | Hosted | Native (shipped April 2026) | Multi-provider teams who want BYOK economics in a workbench |

Three clarifications that come up every time:

LangSmith and BYOK. LangSmith's platform itself uses its own API keys for auth; that's different from who pays for inference. You still connect your own Anthropic, OpenAI, or Google key for the actual LLM calls. LangSmith's create-account docs are explicit about the distinction. Don't conflate the two. Teams hitting the $2.50-to-$5.00 per-1K-trace ceiling at scale should also read the LangSmith alternatives breakdown for the auto-upgrade mechanics.

Langfuse and ClickHouse. Langfuse was acquired by ClickHouse on January 16, 2026. Langfuse Cloud continues as-is; the MIT license and self-hosting posture are unchanged. The Langfuse joining-ClickHouse post covers the full shape of the deal. Teams already running ClickHouse have one less foreign dependency to stand up for self-hosting. For when Langfuse isn't the right destination, see our Langfuse honest comparison.

Braintrust and the gateway. Braintrust supports BYOK in the sense that you paste your own provider keys into the workspace, but the inference traffic itself routes through their AI Gateway endpoint, not directly from your app to Anthropic, OpenAI, or Google. That's a structural difference from Prompt Assay's BYOK posture, where keys decrypt only inside the LLM call you triggered and the request goes straight to your provider. If a managed gateway is part of what you want (request unification, per-call observability, retry logic), Braintrust's choice is deliberate and earns the $249/mo. If "no third party in the request path" is part of the migration motivation, that's the trade-off to weigh.

The Anthropic Console deserves a direct call: it's free with your API usage, the Humanloop team contributes inside Anthropic, and the Workbench plus Evaluate features cover authoring, versioning, and side-by-side comparison for Claude. For a Claude-only team, that is the most honest pick. The BYOK overview in our docs explains where Prompt Assay fits next to it. Short version: we do multi-provider; Console doesn't.

BYOK economics for a re-homed team (the actual math)

Bring-your-own-key (BYOK) means your provider API key connects directly from the workbench to Anthropic, OpenAI, or Google. The platform sees metadata about what you're doing, but your inference bill goes straight from your provider to you. Think of it like running an application on colocated hardware: you still pay the provider, but nothing sits between you and them charging rent. The opposite shape is a proxy-based platform, where the platform makes LLM calls on your behalf and bills you for each one, usually with a markup on top of the raw provider cost. For a deeper walk through what BYOK means for prompt tools and why it matters for cost, the companion post covers the platform-fee-vs-markup math in full.

Most of the tools in the landscape above do BYOK for inference correctly. The real cost surface is the platform fee plus any metered charges on top of it. That's where the spread lives.

One vocabulary note before the numbers. LangSmith and Langfuse meter in traces (each recorded LLM call, eval run, or agent step counts as one); Prompt Assay and Vellum charge a flat platform fee regardless of trace count. Inference in every row is paid to your provider directly and isn't shown below. For scale: 100K traces/mo is a lightly-used feature, 1M/mo is a production feature serving a few thousand daily users, and 10M/mo is core-product scale across a larger user base.

Monthly platform cost at three workloads (April 2026 list prices):

| Trace volume | LangSmith Plus (1 seat) | Langfuse | Vellum | Prompt Assay |
|---|---|---|---|---|
| 100K traces/mo | $39 + ~$225 overage ≈ $264 | Pro $199 (100K included) | Pro $500 | Solo $49 |
| 1M traces/mo | $39 + ~$2,475 overage ≈ $2,514 | Enterprise ~$2,499 | Enterprise custom | Solo $49 |
| 10M traces/mo | $39 + ~$24,975 overage ≈ $25,014 | Enterprise ~$2,499 | Enterprise custom | Solo $49 |
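To run the same math on your own volume, here is a minimal sketch that reproduces the LangSmith Plus column and compares it with a flat fee. The seat fee and overage rate come from the table; the 10K included-trace allowance is an assumption inferred from the ~$225 overage at 100K traces, and the function names are illustrative rather than any vendor's API. Check the allowance against the vendor's current pricing page before budgeting.

```python
SEAT_FEE_USD = 39.00        # LangSmith Plus, per seat (from the table)
OVERAGE_PER_1K_USD = 2.50   # per 1K traces beyond the allowance (from the table)
INCLUDED_TRACES = 10_000    # ASSUMPTION: inferred from the ~$225 overage at 100K traces


def metered_monthly(traces: int, seats: int = 1) -> float:
    """Estimated monthly platform cost in USD for seat fee plus trace overage.

    Inference is excluded; it is paid to the provider directly.
    """
    billable = max(0, traces - INCLUDED_TRACES)
    overage = billable / 1_000 * OVERAGE_PER_1K_USD
    return seats * SEAT_FEE_USD + overage


def flat_monthly(fee_per_seat: float, seats: int = 1) -> float:
    """Flat platform fee: independent of trace volume."""
    return fee_per_seat * seats


if __name__ == "__main__":
    for traces in (100_000, 1_000_000, 10_000_000):
        metered = metered_monthly(traces)
        flat = flat_monthly(49.00)  # Prompt Assay Solo, from the table
        print(f"{traces:>10,} traces/mo: metered ${metered:,.0f} vs flat ${flat:,.0f}")
```

Swap in your real monthly trace count and seat count; the crossover point between a metered and a flat plan is usually what settles the choice.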

A few honest asterisks:

- LangSmith's trace-based billing (you're charged for each run recorded, so every eval cell and every production call is a billable line item) is the mechanism you're budgeting around. Light logging patterns can cut traces substantially. Teams who run evals on every request instead of sampling will hit the overage band fast.

- Langfuse self-hosted is MIT-licensed and free; your real cost becomes the ClickHouse infrastructure, which is non-trivial at medium scale but grows roughly linearly and stays under your control.

- Vellum's tiers aren't on its live pricing page; ZenML's 2025 breakdown captured the numbers. Expect tier-specific quotas on top of the monthly fee.

- Prompt Assay stays flat on platform fee across scale. The pricing page has the full breakdown, including the Team tier at $99/seat/mo and the Free tier with no credit card.

The principle is one line: on Prompt Assay, your keys connect directly and the platform fee stays flat per seat regardless of trace count. Setup is a five-minute key paste in Settings; the savings compound every month after. The math is the argument. Run it on your actual monthly trace volume before you pick.

Re-home your .prompt files to Prompt Assay

The .prompt format is the durable asset you exported from Humanloop before September 8. It's YAML frontmatter for model configuration plus JSX-inspired tags for the message structure. Humanloop's serialized-files reference documents the full schema. A typical file looks like this:

```
---
model: claude-3-5-sonnet-20241022
temperature: 0.2
max_tokens: 1024
provider: anthropic
---
<system>
You are a careful reviewer.
Respond in valid JSON with fields `score` (1-5) and `notes`.
</system>
<user>
Review the following code for correctness:
{{code}}
</user>
```

Prompt Assay ships a native .prompt importer alongside this guide. The importer is live in the prompts screen's Import dialog as of this post's publish date: you paste the file in, the importer detects the format, extracts the system and user content, preserves {{variable}} interpolation as Prompt Assay fragments, and lands an initial version with the title and target model set. Four fields come across as warnings rather than values: temperature, max_tokens, top_p, and tools / function definitions. The schema reason is simple. Prompt Assay stores the prompt body as opaque text with a per-prompt target model; sampling parameters live at run time via the Workbench Model selector and Compare's per-run overrides, not on the prompt record itself. The importer surfaces the dropped fields so you see exactly what needs manual re-capture.
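If you want to audit a library of .prompt files before importing anything, the sketch below shows one way to pull out the same pieces the paragraph above describes: the frontmatter config, the message blocks, the {{variable}} names, and the fields that would need manual re-capture. It is not Prompt Assay's importer and not a full implementation of Humanloop's schema. The regexes, the function name, and the flat `key: value` frontmatter handling are all assumptions made for illustration; nested YAML such as tool definitions would need a real YAML parser.

```python
import re

# ASSUMPTION: the four fields the article says arrive as warnings, not values.
WARN_ONLY_FIELDS = {"temperature", "max_tokens", "top_p", "tools"}

FRONTMATTER_RE = re.compile(r"\A---[ \t]*\n(.*?)\n---[ \t]*\n(.*)\Z", re.DOTALL)
MESSAGE_RE = re.compile(r"<(system|user|assistant)>(.*?)</\1>", re.DOTALL)
VARIABLE_RE = re.compile(r"\{\{\s*([A-Za-z_][A-Za-z0-9_]*)\s*\}\}")


def parse_prompt_file(text: str) -> dict:
    """Split a .prompt file into config, messages, variables, and warnings."""
    match = FRONTMATTER_RE.match(text.lstrip())
    if match is None:
        raise ValueError("expected frontmatter delimited by '---' lines")
    header, body = match.groups()

    # Flat `key: value` pairs only; indented or commented lines are skipped.
    config = {}
    for line in header.splitlines():
        if ":" in line and not line.startswith((" ", "\t", "#")):
            key, _, value = line.partition(":")
            config[key.strip()] = value.strip()

    messages = [
        {"role": role, "content": content.strip()}
        for role, content in MESSAGE_RE.findall(body)
    ]
    variables = sorted(
        {name for msg in messages for name in VARIABLE_RE.findall(msg["content"])}
    )
    return {
        "config": config,
        "messages": messages,
        "variables": variables,
        "needs_manual_recapture": sorted(WARN_ONLY_FIELDS & config.keys()),
    }


SAMPLE = """---
model: claude-3-5-sonnet-20241022
temperature: 0.2
max_tokens: 1024
provider: anthropic
---
<system>
You are a careful reviewer.
</system>
<user>
Review the following code for correctness:
{{code}}
</user>
"""

if __name__ == "__main__":
    parsed = parse_prompt_file(SAMPLE)
    print(parsed["config"]["model"], parsed["variables"], parsed["needs_manual_recapture"])
```

Running this over a directory of exported files gives you a quick inventory of which prompts carry sampling parameters you will need to re-enter by hand.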

The net flow is three clicks:

Paste the file

Drop the .prompt file into Prompt Assay's import dialog. The importer detects the Humanloop format automatically.

Confirm the mapping

Verify the detected prompt type (system, user, multi-turn) and target model. The importer maps Humanloop model names to Prompt Assay's model list.

Land the initial version

Save it. The first Critique run is a good sanity check before you commit the rest of the library, and it sets up a versioning workflow you control from the first save.

If that flow is where this article finds you, paste a .prompt file into the migration page to see the importer output before signup, or create a free Prompt Assay account directly and import one prompt. No credit card; the importer lives in the prompts screen and the Free tier is where most migrations begin. Drop in a single file and decide before you move the rest. Before the cutover, build a regression baseline on the imported prompt so you can prove parity instead of guessing at it. A downloadable .prompt-to-Prompt Assay checklist is on the roadmap for teams migrating multi-hundred-prompt libraries; for now the importer plus one run is the fastest test.
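One way to build that regression baseline, sketched under stated assumptions: the prompt replies in JSON with a numeric `score` field (as in the example file earlier), and you have a callable that sends one input through the prompt and returns the raw reply. The `Runner` type, both function names, and the stub runners are hypothetical stand-ins; in practice each runner would wrap your own provider call, one for the pre-cutover setup and one for the imported prompt.

```python
import json
from typing import Callable

# Hypothetical: takes the {{code}} input, returns the model's raw JSON reply.
Runner = Callable[[str], str]


def record_baseline(run: Runner, cases: dict[str, str]) -> dict[str, int]:
    """Store the `score` each test case produced before the cutover."""
    return {case_id: json.loads(run(code))["score"] for case_id, code in cases.items()}


def parity_failures(
    baseline: dict[str, int],
    run: Runner,
    cases: dict[str, str],
    tolerance: int = 0,
) -> list[str]:
    """Return case ids whose new score drifts from baseline by more than `tolerance`."""
    failures = []
    for case_id, code in cases.items():
        try:
            drift = abs(json.loads(run(code))["score"] - baseline[case_id])
        except (json.JSONDecodeError, KeyError, TypeError):
            failures.append(case_id)  # a malformed reply counts as a regression
            continue
        if drift > tolerance:
            failures.append(case_id)
    return failures


# Stub runners so the sketch executes without network access.
def old_runner(code: str) -> str:
    return json.dumps({"score": 2 if "eval(" in code else 5, "notes": "stub"})


def new_runner(code: str) -> str:
    return json.dumps({"score": 3 if "eval(" in code else 5, "notes": "stub"})


CASES = {"safe": "print('hi')", "risky": "eval(user_input)"}

if __name__ == "__main__":
    baseline = record_baseline(old_runner, CASES)
    print(parity_failures(baseline, new_runner, CASES))
    print(parity_failures(baseline, new_runner, CASES, tolerance=1))
```

Model output is not deterministic, so a nonzero tolerance or several runs per case is usually more realistic than exact matching.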

Choosing durably (the one-paragraph version)

The honest objection to any "migrate to us" pitch in 2026 is: what stops this platform from being the next Humanloop? Two real answers. First, the business model. Prompt Assay takes a flat platform fee; your provider bill goes to your provider. There is no inference markup to underwrite a future acqui-hire. Second, the export. Prompts, versions, and metadata all come out as a structured JSON bundle. The public API and SDK read the same data the workbench does. If we ever went dark, your prompts leave as a file, the same way your .prompt files did. The durable asset is the prompt itself, wherever it lives. Pick the workbench that makes your next six months of prompt work faster, not the one that promises to be here forever. The vocabulary inside those prompts is durable too: see our field guide to the sixty named techniques worth knowing in 2026.

Ready to re-home one prompt?

Drop a .prompt file into Prompt Assay and watch it land before you move the rest of your library. Start for free at /signup. No credit card, no demo call, no sales gate. Bring your Anthropic, OpenAI, or Google key and import one prompt in under two minutes. For pricing and tier details, see /pricing.

Further Reading

- №09 · May 2026 · Promptfoo alternatives after OpenAI acquisition. Promptfoo joined OpenAI on March 9, 2026. MIT license preserved; the steward changed. For multi-provider eval and red-teaming, the credible 2026 alternatives. Comparisons & Migrations · 11 min read

- №08 · April 2026 · Langfuse alternatives: the honest comparison. Langfuse alternative tools compared in 2026: five buyer scenarios where Langfuse isn't the right fit, with honest tool recommendations for each. Comparisons & Migrations · 14 min read

- №07 · April 2026 · LangSmith alternatives without per-trace fees. LangSmith's auto-upgrade-on-feedback can turn per-trace billing superlinear. Compare Langfuse, Helicone, Phoenix, Braintrust, and where Prompt Assay fits. Comparisons & Migrations · 13 min read

Issue №01 · Published APRIL 19, 2026 · Prompt Assay