What is an Agent Skill?

An Agent Skill is a folder of instructions and supporting files that an LLM agent discovers and loads on demand to perform a specific task. The folder contains a SKILL.md file with YAML frontmatter, optional executable scripts, optional reference docs, and optional assets. The agent reads the metadata at startup, the body when the task matches, and the bundled files only when it needs them. Skills are how an agent specializes without specializing the underlying model.

On this page

Where the term came from

Anthropic published the Agent Skills engineering post on October 16, 2025, alongside the skills-2025-10-02 beta header that turned the feature on in the Claude API. The shape of a skill, a directory with a SKILL.md at the root, was generalized into a cross-vendor specification at agentskills.io. The spec keeps the same frontmatter contract so a skill authored for Claude Code can be picked up by another runtime that follows it.

The framing Anthropic settled on is the one worth memorizing: a Skill is the onboarding guide you would write for a new hire. The agent reads that guide the first time it encounters a task that looks like the guide's domain. It does not retain the guide between sessions; it rediscovers it from the filesystem each time, which is what makes Skills durable when models, runtimes, and prompts change underneath. Skills sit in the same family as the sixty named prompt engineering techniques we keep in a printable reference, but they are an artifact type rather than an instruction type.

Anatomy of a Skill

The minimum viable Skill is a directory and one file:

pdf-processing/

└── SKILL.mdThe SKILL.md carries YAML frontmatter and a Markdown body. The frontmatter holds the discovery metadata; the body holds the procedural instructions.

---

name: pdf-processing

description: Extract text and tables from PDF files, fill forms, merge documents. Use when working with PDFs.

---

# PDF Processing

## Quick start

Use pdfplumber to extract text from PDFs.

[example body content]A richer Skill adds bundled resources without changing the contract:

pdf-processing/

├── SKILL.md # required: metadata + instructions

├── FORMS.md # optional: focused reference

├── REFERENCE.md # optional: detailed API reference

├── scripts/

│ └── fill_form.py # optional: deterministic code

└── assets/

└── template.pdf # optional: static resourcesThe frontmatter contract is short. Two fields are required.

| Field | Required | Constraints |

|---|---|---|

name | yes | 1-64 chars, lowercase alphanumeric and hyphens, no leading or trailing hyphens, no consecutive hyphens, must match the parent directory name |

description | yes | 1-1024 chars, describes what the Skill does AND when to use it |

license | no | License name or filename of a bundled license |

compatibility | no | Up to 500 chars; declares runtime, packages, or network requirements |

metadata | no | Free key-value map for clients that want to store extra fields |

allowed-tools | no | Space-separated list of pre-approved tools (experimental, support varies) |

Two constraints are load-bearing for discovery: the description must describe both what the Skill does AND when the agent should reach for it, and the body should stay under roughly 5,000 tokens with detailed material moved into referenced files. Skills that violate either rule fail in predictable ways. Vague descriptions never trigger; over-stuffed bodies blow the context budget on activation.

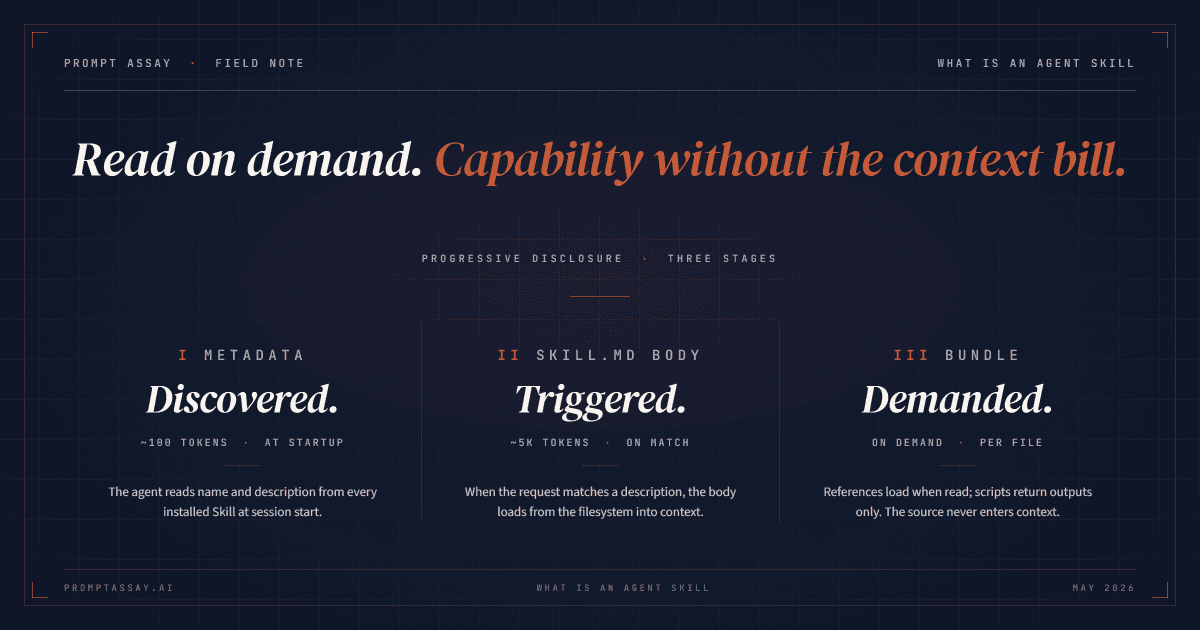

How Skills load: the three stages

The activation model is the most important thing to understand about Skills, and it is the one most teams skip on first read.

Stage 1: metadata at startup, ~100 tokens per Skill

The agent loads the

nameanddescriptionfrom every installed Skill into the system prompt at session start. That is roughly 100 tokens per Skill. A workspace can have dozens of Skills installed without measurably moving the context-window needle. The agent now knows what each Skill is and when to invoke it; nothing more.Stage 2: SKILL.md body when triggered

When the user's request matches a Skill's description, the agent reads

SKILL.mdfrom the filesystem (typically via a Bashcator equivalent) and pulls the body into context. Anthropic's recommendation is to keep this body under 5,000 tokens. Past that, you are paying for context every time the Skill activates, and the agent's attention budget on the actual task starts to suffer. Long instructions belong inreferences/.Stage 3: bundled files on demand

The body can reference other files. Those files only enter the context window when the task asks for them. A Skill can ship dozens of reference files, and the agent will read exactly the ones the current task needs. Scripts behave even better: the script's source code never enters context; only its output does. A 400-line

fill_form.pycosts zero tokens at rest and tens of tokens after it runs.

The cumulative effect is that a workspace can install many Skills with comprehensive bundled content, and the per-call context cost stays small. Each level scales differently:

| Level | When it loads | Token cost | What lives there |

|---|---|---|---|

| 1. Metadata | always (startup) | ~100 tokens per Skill | name and description from the YAML frontmatter |

| 2. Instructions | when the Skill is triggered | under 5,000 tokens recommended | the SKILL.md body |

| 3. Resources | only when the task asks for them | effectively unbounded; scripts cost only their output | references/, scripts/, assets/ |

That cumulative shape is what Anthropic calls progressive disclosure. It is the architectural reason a Skill is worth being more than a prompt.

Skills vs prompts

A prompt is a single string handed to one model call. The string carries instructions, context, sometimes examples, and the user's input. After the call returns, the prompt is gone unless your application stores it. The next call gets a fresh prompt.

A Skill is a versioned folder that lives outside any single call. The agent rediscovers it from the filesystem each session and decides at runtime whether to activate. The body of SKILL.md is closer to a prompt than the rest of the artifact, but five things make a Skill structurally different:

| Dimension | Prompt | Skill |

|---|---|---|

| Lifecycle | per-call ephemeral | persistent on disk, rediscovered each session |

| Composition | a string (sometimes with XML tags) | a directory of files with a metadata contract |

| Activation | always, by being included in the request | conditional, decided by the agent based on the description match |

| Resources | inline only; everything is in the string | scripts, references, assets loaded on demand |

| Portability | tied to the calling app's prompt template | portable across runtimes that follow the spec |

The blunt operational difference: if your "prompt" is the same paragraph copy-pasted into a dozen calls per day, that paragraph wants to be a Skill. The Skill activates when the description matches, the prompt only ever activates when you remember to paste it, and the maintenance cost over six months is not close. Versioning that paragraph the way you would version a first-class prompt artifact is the next obvious step, and the one most teams skip.

The blunt design difference: a Skill is also a negation contract. The agent reads the description and decides whether to trigger; it must also decide not to trigger when the task does not match. A good description includes both the positive case and the boundary, so the agent does not invoke a PDF Skill on a JSON parsing task. Prompts do not have this problem because they are always triggered explicitly. Skills inherit it.

If you want the prompt-engineering vocabulary that maps cleanly onto skill bodies, the field guide to sixty prompt techniques catalogs the canonical moves and where each one belongs in a workflow. The body of a Skill is still a prompt; the techniques apply unchanged.

Skills vs MCP

This is the comparison that confuses the most people, because both terms get thrown around when teams talk about extending agent capabilities. They are not competing; they solve different problems.

MCP is a transport protocol. It defines a wire format for an agent to discover and call tools, read resources, and receive prompts from a server. An MCP server is a process that exposes those capabilities; the agent is the client. The contract is the protocol itself: requests, responses, schemas. MCP says nothing about how the agent decides to use a tool, what instructions accompany the call, or what workflow chains tools together.

A Skill is a packaging convention. It defines a folder layout and a metadata contract for shipping instructions, scripts, and resources. It says nothing about transports. The Skill body might tell the agent to call a local script, hit a REST API, drive an MCP server, or do all three in sequence. The Skill is the workflow; the tool surface is whatever the workflow asks for.

The clean way to hold the two in your head:

- MCP answers how does the agent talk to this tool?

- A Skill answers what is the tool for, when does the agent reach for it, and what is the surrounding workflow?

They compose. A Skill named salesforce-reporting might pull a list of available reports from a Salesforce MCP server, format the response into a memo, and run a local Python script to render charts. The MCP server provides the connection; the Skill provides the recipe.

Anthropic's framing of the same point: Skills "complement Model Context Protocol (MCP) servers by teaching agents more complex workflows that involve external tools and software". Skills sit one level above tool invocation. They are how an agent learns the shape of work, not the wires.

Six dimensions of a Skill worth shipping

Here is the framework we use when assaying a Skill before it gets installed in a production agent. The dimensions track loosely with our six-dimension critique for prompts, but they are calibrated for the Skills surface specifically.

1. Discovery. Does the description say what the Skill does AND when the agent should use it? Vague descriptions never trigger. Good descriptions name the trigger keywords explicitly: "Use when working with PDFs, forms, or document extraction" beats "helps with PDFs." A Skill the agent never invokes is a Skill that does not exist.

2. Instruction quality. Is the body under roughly 5,000 tokens? Are the steps procedural enough that a fresh-context agent can follow them without prior knowledge? Does it tell the agent what not to do, or only what to do? Negation is load-bearing in instruction prose; agents follow paired positive-and-negative instructions more reliably than they follow either alone.

3. Examples. Does the body show at least one fully worked example with input and output? Skills without examples leak ambiguity into every activation. The agent imagines what the workflow should look like and sometimes imagines wrong. One concrete example pinned at the top of the body beats four paragraphs of abstract description.

4. Portability. Does the Skill name a runtime requirement it actually depends on, or does it leak runtime assumptions silently? A Skill that calls requests.get(...) works in Claude Code and breaks on the API surface. A Skill that mutates global state breaks under concurrent invocation. Declare the runtime in compatibility: if it matters; do not let the agent discover the constraint at runtime.

5. Efficiency. Are the heavy reference files in references/ rather than inlined in the body? Are deterministic operations in scripts/ rather than asked of the model? A 200-line database schema inlined in the body costs 200 lines per activation. Pulled out to references/SCHEMA.md, it costs zero until the task asks for it.

6. Security. Does the Skill execute code from untrusted sources? Does it fetch external content that could carry instructions? Anthropic's own guidance is direct: "only use Skills from trusted sources". A malicious Skill is a prompt-injection delivery mechanism with filesystem access. Audit scripts/ like you audit a dependency, because that is what it is. The same key-handling discipline that backs our BYOK posture applies: a Skill that needs a credential should declare that fact in compatibility:, not paper it over with a hardcoded value.

A Skill that scores well on all six is the kind of artifact that survives a model bump, a runtime change, and a hand-off to a new engineer. A Skill that scores poorly on any one of them is a Skill that will quietly stop working in production within a quarter and nobody will know why.

The load-bearing question is behavioral

The hardest thing about Skills is not authoring them. It is testing them.

A prompt's correctness can be checked in isolation: pass the string to a model, look at the output, decide if it satisfies the rubric. A Skill's correctness has three behavioral questions, and all three matter:

- Does it activate when it should? Given a representative request that falls inside the Skill's domain, does the agent decide to invoke this Skill? If the answer is sometimes, the description is too vague.

- Does it stay quiet when it should not? Given a request from a neighboring domain, does the agent leave this Skill alone? If a

pdf-processingSkill triggers on JSON parsing tasks, the description is too broad. - Does it produce the right output once activated? Given activation, does the body's instructions plus the bundled scripts and references produce a correct result? If the answer depends on undisclosed environment state, the

compatibility:field is incomplete.

These three questions are evaluations, not vibe checks. They want held-out test cases the way prompt regressions want test cases, and they should run on every Skill change the same way prompt regression tests run on every prompt change. The difference is that a Skill regression has three failure modes (false-positive activation, false-negative activation, wrong output once active) where a prompt regression has one (wrong output).

This is the work the Skills workbench handles, and the reason a Skill is treated as a versioned, evaluated artifact rather than a folder you copy-paste into ~/.claude/skills/.

What changes when you treat Skills this way

Three things shift, in roughly the order teams notice them:

The first thing that shifts is the prompt registry stops growing. Repeated guidance that used to live as paragraphs pasted into a dozen call sites collapses into one Skill the agent invokes when the description matches. The codebase gets quieter.

The second thing that shifts is review ergonomics. A Skill is a directory, so a Skill change is a directory diff. A reviewer can see the description change, the body change, and the new bundled file in one PR, the same way they review any other piece of code. Prompts scattered across application strings do not give you that view.

The third thing that shifts is the unit of expertise becomes portable. A salesforce-reporting Skill written against the open spec can be installed in any runtime that follows the spec. The team that wrote it does not own its operational surface; the runtime does. That portability is the same property BYOK gives you on the cost side: the platform is replaceable, your provider relationship and your authored artifacts are not.

That is the design payoff Anthropic is pointing at when they call Skills "the unit of agent expertise." A unit of expertise that lives outside the model, outside the prompt, and outside any one runtime is a unit you can actually iterate on across years.

How Prompt Assay handles Skills

The Skills workbench treats a Skill the way the rest of Prompt Assay treats a prompt: as a versioned artifact that gets authored in a real editor, lint-checked, critiqued on a fixed scorecard, evaluated against held-out cases, and shipped with a trust artifact your readers can verify.

Authoring is multi-file. The editor opens SKILL.md plus any scripts/ and references/ files in a single workbench, with version history and per-file diff. The linter runs nineteen rules against every save · seven frontmatter, five body, seven security. The security tier (security-skill-secret-in-body, security-skill-secret-in-script, security-skill-curl-bash, security-skill-untrusted-fetch, security-skill-undocumented-shell, security-skill-disable-model-invocation-on-destructive, and the fenced-code variant of the secret detector) carries a Shield glyph in the lint panel and routes through the AI Fix-Lint flow because secrets and footguns shouldn't have a one-click autofix.

The Skills critique scores against six skill-specific dimensions: D1 Discovery Fidelity (will the model trigger this?), D2 Instruction Quality, D3 Example Coverage, D4 Cross-Provider Portability, D5 Token Efficiency, D6 Security & Safety Posture. The scorecard pattern matches the prompt-side critique; the dimensions are different because the artifact is different.

The differentiator is the Behavioral Eval. It answers the three behavioral questions above directly. Author trigger probes (the cases the Skill should activate on) and non-trigger probes (the cases it should stay dormant on), pick the providers you ship to (Claude, GPT, Gemini), and run. The harness fans out one cell per (probe × model) pair, captures each model's response, and runs a judge call per cell to score activation and instruction adherence. Three aggregate scores fall out: Discovery Fidelity, Instruction Adherence, and Cross-Provider Consistency. A neutral grader scoring the same Skill against three providers in one pass · something a first-party tool can't ship, because each provider only knows its own model.

Save a run as a public Skill Report (noindex by default, owner-revocable, opt-in body publish), and the same artifact backs a Shields.io README badge so the score on your repo stays in sync with the latest published run. Every cell runs on your provider keys; we never proxy that traffic.

Closing

Skills are how an LLM agent learns the shape of work without learning the work itself. The folder structure is small, the metadata contract is short, and the activation model is the part most teams miss on first read. Treat a Skill the way you treat any other production artifact: write a description that triggers cleanly, keep the body lean, push detail into referenced files, evaluate the activation behavior on every change.

The Skills workbench is where Skills get authored, versioned, and evaluated against the three behavioral questions, in the same surface that already handles prompts. See how each tier prices out, or connect a provider key to a free workspace and try it. No card, no demo call, no sales gate.

Frequently Asked Questions

Reader notes at the edge of the argument.

Ship your next prompt in the workbench.

Prompt Assay is the workbench for shipping production LLM prompts. Version every change. Critique, improve, and compare across GPT, Claude, and Gemini. Bring your own keys. No demo call. No card. No sales gate.

Further Reading

- №03·April 2026

Sixty prompt engineering techniques, organized

A 2026 field guide to the 60 prompt engineering techniques worth knowing, organized into 10 workflow families with canonical examples from The Prompt Report.

Prompt Engineering·13 min read - №06·April 2026

How to version prompts: the 2026 guide

Prompt versioning captures every prompt change as an immutable artifact. Seven concrete steps, worked examples, and where it fits in your stack.

Prompt Engineering·13 min read

Issue №10 · Published MAY 10, 2026 · Prompt Assay