Prompt drift: a 2026 detection playbook

Prompt drift is when an LLM-powered feature's output quality changes over time even though the prompt itself doesn't change. Three causes drive it: silent provider model updates, input-distribution shifts, and cascading changes in dependent prompts. The cheapest controls: pin the model, run a small eval suite on every announced provider update, judge with a second model.


What prompt drift is

Prompt drift gets used loosely to mean anything that makes an LLM feature worse over time. Tightening the definition is where the discipline starts.

Drift is when the prompt is constant and the output behavior changes anyway. Regression is when you change the prompt and the output gets worse. Both are real failure modes. The fix for each is different, and conflating them is how teams end up monitoring the wrong thing.

A worked example: you ship a customer-support classifier in March on Claude Sonnet 4. The prompt is checked into git; no one edits it. In June, support tickets start getting misrouted at a higher rate. The prompt is unchanged, the input distribution is roughly the same, and the model alias still says Sonnet 4. Something underneath moved. That's drift.

If instead someone had pushed a "cleaned up the system prompt" commit on June 14th and ticket routing degraded the next day, that's regression. Pre-deploy gates catch regression because the gate runs against the version you're about to ship. Drift slips past pre-deploy gates because it hits the version you already shipped.

Regression testing has its own discipline. The rest of this article is about the other failure mode.

The three causes

Three causes account for most of the drift you'll see in production. Each has a different control.

Silent model updates

A model alias is the bare version-less name you pass to the API: claude-sonnet-4-5, gpt-4o, gemini-2.5-pro. Behind each alias sits whatever dated snapshot the provider has currently routed it to, and that routing changes over time as new snapshots ship. Sometimes the change is announced; sometimes it isn't.

The canonical recent example: OpenAI shipped a GPT-4o update in April 2025 that made the model excessively sycophantic, then rolled it back days later. The version string hadn't changed. Production users saw their feature shift, ran their own postmortem, and either caught it through evals or didn't.

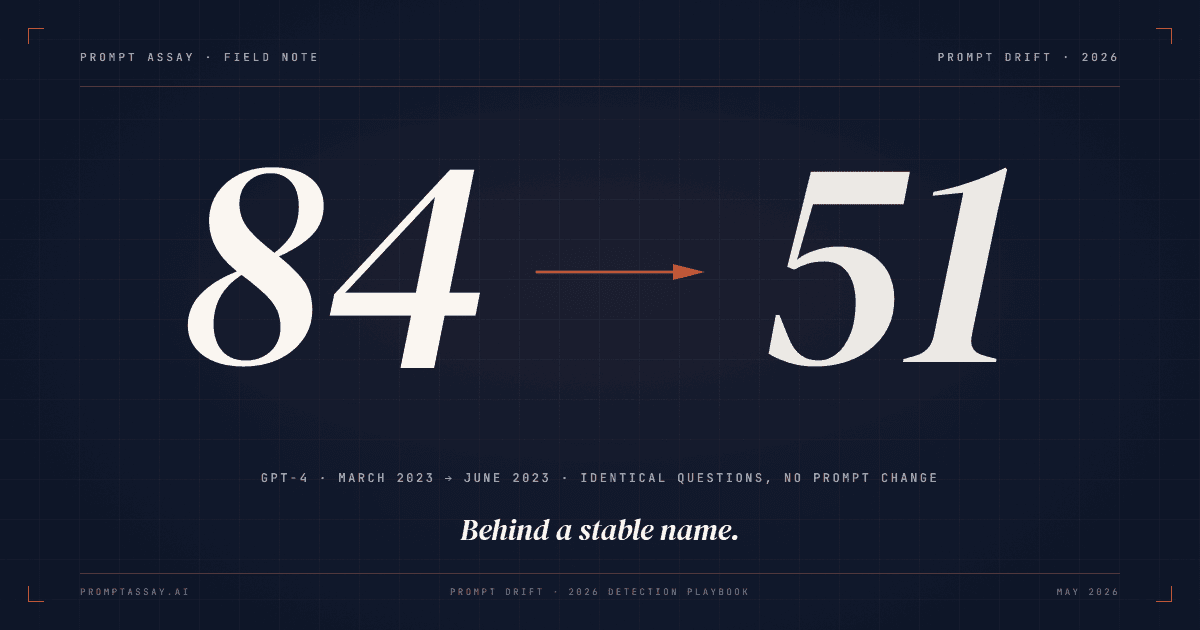

Empirical evidence that this isn't an edge case: a 2023 Stanford and UC Berkeley study tracked GPT-4's accuracy on identifying prime numbers from 84% in March to 51% in June, on identical questions, with instruction-following degraded over the same period. (How is ChatGPT's behavior changing over time?, Chen, Zaharia, Zou, arXiv 2307.09009.) Behind a stable model name, three months of API updates moved accuracy 33 points. Your feature is downstream of that.

Input-distribution shift

The prompt is constant. The model is pinned. But the population of inputs hitting the prompt changes. Your classifier was tuned on 80% English and 20% Spanish; a marketing campaign shifts the mix to 50/50; the prompt's edge cases get hit more often. Output quality drops without anything in your stack having moved.

This is the cause teams under-instrument. Logging the input distribution alongside the eval-suite score is what surfaces it.
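One way to make the input mix visible is to log a categorical summary alongside each eval run and compare it to the mix the prompt was tuned on. The sketch below assumes language is the dimension that matters and uses total variation distance as the comparison; the helper names and the 0.15 alert threshold are illustrative assumptions, not recommended values.

```python
from collections import Counter


def mix(labels):
    """Turn a list of category labels into a {label: share} distribution."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def total_variation(baseline, current):
    """Total variation distance between two categorical distributions (0 to 1)."""
    labels = set(baseline) | set(current)
    return 0.5 * sum(abs(baseline.get(l, 0.0) - current.get(l, 0.0)) for l in labels)


# Mix the classifier was tuned on versus the mix after the campaign.
baseline_mix = {"en": 0.8, "es": 0.2}
current_mix = mix(["en"] * 50 + ["es"] * 50)

shift = total_variation(baseline_mix, current_mix)
ALERT_THRESHOLD = 0.15  # hypothetical; tune against your own traffic
shift_detected = shift > ALERT_THRESHOLD
```

For the 80/20 to 50/50 example, the distance comes out at 0.3, well above the illustrative threshold. Storing this number next to the eval-suite score is what lets you tell a quality drop caused by new inputs from one caused by the model.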

Cascading prompt dependencies

In agentic systems, prompt A's output becomes prompt B's input. A subtle change in A (a model update, an input shift, even a temperature dial) propagates downstream and amplifies. The classifier returns a slightly broader category; the router escalates more cases; the responder writes longer replies; the user-perceived behavior shifts.

Cascade drift is the hardest to detect because no single component looks broken in isolation. The discipline is to add at least one end-to-end test case to your golden set that exercises the full chain: input goes in at prompt A, expected behavior is checked at the final output. Local pass-rates on each prompt don't catch cumulative drift.
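An end-to-end golden case can be sketched as follows. The three stage functions are hypothetical stubs standing in for real model calls, and the case fields (`must_contain`, `max_words`) are illustrative; the point is only that the assertion runs against the final output, not against any single stage.

```python
def classify(ticket: str) -> str:
    """Stub for prompt A; a real version would call the model."""
    return "billing" if "refund" in ticket.lower() else "general"


def route(category: str) -> str:
    """Stub for prompt B: decide which queue handles the ticket."""
    return "escalate" if category == "billing" else "self_serve"


def respond(queue: str) -> str:
    """Stub for prompt C: draft the reply."""
    if queue == "escalate":
        return "A billing specialist will contact you."
    return "This help article should answer your question."


def run_chain(ticket: str) -> str:
    """Feed the ticket in at prompt A and return the final reply."""
    return respond(route(classify(ticket)))


# One end-to-end golden case: input at prompt A, check only the final output.
E2E_CASES = [
    {
        "input": "I want a refund for last month",
        "must_contain": "billing specialist",
        "max_words": 40,
    },
]


def check_e2e(case) -> bool:
    reply = run_chain(case["input"])
    return case["must_contain"] in reply and len(reply.split()) <= case["max_words"]


results = [check_e2e(case) for case in E2E_CASES]
```

A length bound like `max_words` is one cheap way to notice the "responder writes longer replies" symptom even when each stage still passes its local checks.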

The 2026 provider deprecation calendar

Pinning to a dated snapshot is the cheapest control against silent updates. But pinning has a cost: every pinned model eventually retires, and when it does, you migrate or you stop working.

The three major providers publish deprecation calendars on very different terms.

| Provider | Notice floor | Currently announced retirements |
|---|---|---|
| Anthropic | 60 days, public policy | Sonnet 3.7 retired 2026-02-19; Sonnet 4 and Opus 4 retire 2026-06-15 |
| OpenAI | No published floor | Wide sunset on 2026-10-23: gpt-4o-2024-05-13, gpt-4.1-nano, o4-mini, plus older gpt-4-turbo, gpt-3.5-turbo, o1, o3-mini snapshots |
| Google Vertex AI / Gemini API | ~1 month on stable models; ~2 weeks on previews | Gemini 2.0 Flash retires 2026-06-01; Gemini 2.5 family retires not-before 2026-10-16 |

Sources: Anthropic Model Deprecations, OpenAI Deprecations, Vertex AI model versions. Read May 2026.

Two things to notice. First, the notice windows are asymmetric. Anthropic publishes a 60-day floor; OpenAI publishes none; Google's stable-model window, at roughly a month, is the shorter of the two published floors. Single-provider stacks track one calendar; multi-provider stacks track all three, each with different ergonomics. The BYOK (bring-your-own-key) posture, where your API keys connect directly to each provider, doesn't change the deprecation risk, but it does mean you see every vendor's email and dashboard, not a single aggregated view from a middleman. Tradeoff worth knowing.

Second, this isn't abstract. A Sonnet-4-only stack has roughly 33 days. A GPT-4o stack pinned to the May 2024 snapshot has roughly five months. A Gemini-2.0-Flash stack has 19 days. The pinned-version reprieve runs out, and the team that didn't instrument drift has to migrate without a baseline. A forced migration without an eval suite to anchor it can eat engineer-weeks of catch-up work, as the Humanloop sunset cohort learned in late 2025.

The pinning conversation isn't "should we pin?" The answer is yes; Google's Vertex AI docs describe latest-style aliases as auto-updating to new versions when they ship, which is exactly the silent-update problem you're trying to control. The conversation is "how do we manage the fact that the pin is a tenancy, not a permanent state?"
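Treating the pin as a tenancy can be as simple as keeping the retirement date next to each pinned model and checking how much time is left. The sketch below uses the retirement dates from the table above; the registry keys are placeholders rather than exact provider identifiers, and the 60-day warning window is an assumption.

```python
from datetime import date

# Keys are placeholders for your pinned snapshots; dates come from the table above.
PINS = {
    "claude-sonnet-4": date(2026, 6, 15),
    "gpt-4o-2024-05-13": date(2026, 10, 23),
    "gemini-2.0-flash": date(2026, 6, 1),
}


def days_remaining(retires_on: date, today: date) -> int:
    """Whole days between today and the announced retirement date."""
    return (retires_on - today).days


def migration_queue(pins, today, warn_within_days=60):
    """Pinned models retiring within the warning window, soonest first."""
    due = [(name, days_remaining(d, today)) for name, d in pins.items()]
    return sorted([p for p in due if p[1] <= warn_within_days], key=lambda p: p[1])


today = date(2026, 5, 13)  # publication date of this issue
queue = migration_queue(PINS, today)
# queue == [("gemini-2.0-flash", 19), ("claude-sonnet-4", 33)]
```

Run from a scheduled job or a CI step, a check like this turns the provider's deprecation email into a dated work item instead of a surprise.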

Detection and the right cadence

You don't need a continuous drift monitor. You need three things on a cadence.

Three signals

Eval-suite regression on re-run. You re-run your golden set (the fixed labeled corpus of inputs your suite scores against a rubric), compare to baseline. A score drop on cases that previously passed is the cleanest drift signal because the inputs and rubric are fixed; only the model's behavior can have moved.

Production complaint clustering. Tickets, thumbs-down feedback, or any user-facing signal that clusters by feature surface. Drift rarely shows as a single bad output; it shows as a slight skew across many.

Judge-score drift on a rolling sample. LLM-as-judge means handing the output and a rubric to a second model and asking it to score: think of it as assertEquals for subjective quality. Pull 50 to 100 fresh production outputs every two weeks, run the judge against the same rubric you use for evals, and chart the score over time. Trends matter; single runs are noisy.
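"Trends matter; single runs are noisy" can be made concrete by comparing the mean of the most recent few runs against the mean of the runs before them. This is a minimal sketch; the window of three runs, the 0.3-point threshold, and the 1-to-5 score scale are assumptions to adjust for your own rubric.

```python
from statistics import mean


def trend_flag(scores, window=3, min_drop=0.3):
    """Compare the mean of the latest `window` runs against the mean of the
    `window` runs before them; flag when the drop exceeds `min_drop`."""
    if len(scores) < 2 * window:
        return False  # not enough history to call a trend
    earlier = mean(scores[-2 * window:-window])
    recent = mean(scores[-window:])
    return (earlier - recent) > min_drop


# Hypothetical biweekly mean judge scores on a 1-5 rubric.
sustained_decline = [4.4, 4.5, 4.3, 4.1, 3.9, 3.8]
single_dip = [4.4, 4.5, 4.3, 4.4, 3.9, 4.5]

decline_flagged = trend_flag(sustained_decline)  # True: three low runs in a row
dip_flagged = trend_flag(single_dip)             # False: one noisy run
```

The single low run in the second series does not trip the flag, which is the behavior you want from a signal meant to be read on a cadence.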

When two signals diverge (the eval suite is green but complaints are rising), the gap usually points at input-distribution drift the golden set isn't sampling for. Treat divergence as a cue to re-sample the golden set from current production traffic, not to suppress one of the signals.

Cadence, not a monitor

Continuous monitoring is expensive in tokens (judge calls add up) and noisy in signal (small score wobbles trigger alarms that aren't actionable). For almost every team, a cadence-based discipline is sufficient and cheaper. Teams that do want continuous trace-storage-plus-telemetry on top of their inference traffic have a separate set of options in the post-acquisition eval-tool landscape; that posture and this article's posture are not mutually exclusive.

The cadence that works:

- Every prompt change: re-run the suite as a pre-deploy gate. This is regression testing; see the regression-testing guide for the mechanics.

- Every announced provider update: re-run the suite within 48 hours of the announcement. Anthropic, OpenAI, and Google all email deprecation and model-update notices; treat the email as a work item.

- Quarterly: re-run the suite even when nothing has been announced. This catches the input-distribution-shift cause that no provider announcement will tell you about.
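The three triggers above can be sketched as a small check that a calendar reminder or CI job could call. Function and parameter names are hypothetical, a quarter is approximated as 91 days, and dates are tracked at day granularity, so a run on the announcement day counts as covering it.

```python
from datetime import date, timedelta

QUARTER = timedelta(days=91)
ANNOUNCEMENT_SLA = timedelta(hours=48)


def rerun_reasons(today, last_run, prompt_changed=False, announced_on=None):
    """Return the list of triggers that call for a suite re-run today."""
    reasons = []
    if prompt_changed:
        reasons.append("prompt change (pre-deploy gate)")
    # An announcement newer than the last run has not been evaluated yet.
    if announced_on is not None and last_run < announced_on:
        reasons.append(f"provider update, due by {announced_on + ANNOUNCEMENT_SLA}")
    if today - last_run >= QUARTER:
        reasons.append("quarterly re-run")
    return reasons


reasons = rerun_reasons(
    today=date(2026, 5, 13),
    last_run=date(2026, 2, 1),
    announced_on=date(2026, 5, 12),
)
```

An empty list means nothing is due; anything else is a work item for whoever owns the cadence calendar.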

Prompt Assay ships eval suites with LLM-as-judge on every tier, including Free, but saying "the workbench has a built-in cadence engine" would overstate it. You maintain the suite, you decide when to run it, and we score it. That's the honest framing. We don't ship a continuous drift monitor; the discipline below is what we ship instead. A cadence beats a monitor on cost and signal-to-noise for most teams.

The four-step playbook

Four steps assemble the discipline. Each builds on the last; none requires more than an afternoon.

Pin your model to an explicit version

Replace latest aliases with dated versions in your production code (the dated form like claude-sonnet-4-5-YYYYMMDD, not the bare alias claude-sonnet-4-5; each provider publishes its own date suffix convention). Update the pin only when you've re-run the eval suite against the new version. Pinning costs nothing and protects against silent updates between your re-runs.

Build a golden set from production traffic

Sample real production inputs (anonymize if needed) and label them with expected behavior. Cover happy path, edge cases, and adversarial inputs (the 60-technique field guide catalogs the prompt-injection and edge-case families if you don't have your own seeds yet). Maxim AI's golden-dataset guide derives roughly 246 cases per scenario from a 95%-confidence sampling formula for production-critical evaluation; treat that as the target as your eval discipline matures, and start smaller, adding cases as edge cases surface in production.
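For a sense of where a number like 246 can come from, the standard sample-size formula for estimating a proportion, n = z² · p(1 − p) / e², lands on it for one plausible set of inputs. This is a sketch under assumptions: z = 1.96 is the usual 95% confidence value, but the expected pass rate p = 0.8 and margin of error e = 0.05 are inputs chosen here because they reproduce the figure, and the guide itself may use different ones.

```python
import math


def sample_size(z: float, p: float, margin: float) -> int:
    """Cases needed to estimate a pass rate `p` within +/- `margin`
    at the confidence level implied by `z` (normal approximation)."""
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)


# z = 1.96 corresponds to 95% confidence. p = 0.8 and margin = 0.05 are
# assumed inputs that land on 246; the guide may use others.
n_cases = sample_size(z=1.96, p=0.8, margin=0.05)        # 246
n_worst_case = sample_size(z=1.96, p=0.5, margin=0.05)   # 385, the most conservative p
```

Widening the margin is what makes a smaller starting set defensible: at a 10-point margin the same formula asks for far fewer cases, at the price of a coarser pass-rate estimate.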

Versioning the golden set alongside the prompt is what lets you compare runs over months. Re-sample fresh production inputs into the set every 6 to 12 months: input distributions shift, and a stale corpus quietly masks input-distribution drift behind an "all pass" signal.
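Comparing runs over months reduces to diffing per-case results between a stored baseline and a re-run of the same golden-set version. A minimal sketch, assuming each run is stored as a mapping from case ID to pass/fail; the case IDs and storage shape are hypothetical.

```python
def newly_failing(baseline: dict, rerun: dict) -> list:
    """Case IDs that passed in the baseline run and fail in the re-run."""
    return sorted(
        cid for cid, passed in baseline.items()
        if passed and not rerun.get(cid, False)
    )


def pass_rate(run: dict) -> float:
    """Share of cases that passed in a run."""
    return sum(run.values()) / len(run)


# Both runs must use the same golden-set version for the diff to mean anything.
baseline = {"case-001": True, "case-002": True, "case-003": False, "case-004": True}
rerun = {"case-001": True, "case-002": False, "case-003": False, "case-004": True}

drifted = newly_failing(baseline, rerun)           # ["case-002"]
drop = pass_rate(baseline) - pass_rate(rerun)      # 0.25
```

The list of newly failing cases is usually more useful than the aggregate drop, because it tells you which inputs to read first.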

Score with LLM-as-judge on a different model family

Hand the output and the rubric to a second model and ask it to score. Run the judge on a different family from the prompt under test: judge on GPT or Gemini if the prompt runs on Claude; judge on Claude if it runs on GPT or Gemini. Self-preference bias is documented and matters.

Pair the judge with cheap keyword pre-filters for obvious-fail cases so you're not burning judge tokens on outputs that fail a structural check.
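The pre-filter and the cross-family rule can be sketched together. This assumes the prompt under test is expected to return a JSON object with a `category` field; the allowed categories, the family mapping, and the function names are all hypothetical, and no judge call is made here, only the triage that decides what reaches the judge.

```python
import json

# Hypothetical mapping: always judge on a different family than the prompt runs on.
JUDGE_FAMILY = {"claude": "gpt", "gpt": "claude", "gemini": "claude"}

ALLOWED_CATEGORIES = {"billing", "technical", "account"}


def structural_fail(output: str):
    """Return a failure reason for obvious-fail outputs, or None if the
    output should go on to the judge."""
    if not output.strip():
        return "empty output"
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return "not valid JSON"
    if not isinstance(parsed, dict) or parsed.get("category") not in ALLOWED_CATEGORIES:
        return "missing or unknown category"
    return None


def triage(outputs, prompt_family):
    """Split outputs into structural failures and a judge queue, and pick
    the judge family for the queue."""
    failed, to_judge = [], []
    for out in outputs:
        reason = structural_fail(out)
        if reason:
            failed.append((out, reason))
        else:
            to_judge.append(out)
    return failed, to_judge, JUDGE_FAMILY[prompt_family]


outputs = ['{"category": "billing"}', "", "Sure! Here is the answer", '{"category": "other"}']
failed, to_judge, judge_family = triage(outputs, prompt_family="claude")
```

In this example only one of four outputs reaches the judge; the other three are scored as failures for free.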

Re-run on a cadence

Every prompt change (including parameter edits like temperature, top_p, or system-prompt cleanup), every announced provider update, and quarterly even when nothing has been announced. Three triggers; calendar reminders, not a continuous monitor. On teams larger than one engineer, designate an owner for the cadence calendar; the discipline doesn't run itself.

A note on cost. Pinning costs nothing. A 100-case suite re-run quarterly costs four judge calls per case per year (100 cases × 4 quarterly runs = 400 judge calls per year per prompt). At Claude Haiku 4.5 input and output pricing, this is typically under 1% of the inference spend the prompt itself generates in a quarter. BYOK means you see that bill in your provider account, not through a Prompt Assay markup. Your keys, your bill, no middleman.

The forced-migration alternative, the one that hits you if you didn't instrument, costs engineer-weeks. Pinning plus four judge calls per case per year is the cheapest insurance available.
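The 400-call figure covers the quarterly trigger only. A small sketch of the same arithmetic with the other two triggers added in; the counts for announced updates and prompt changes are made-up inputs, and the function name is hypothetical.

```python
def judge_calls_per_year(cases, quarterly_runs=4, announced_update_runs=0, prompt_change_runs=0):
    """Judge calls per prompt per year: one call per case per suite run."""
    runs = quarterly_runs + announced_update_runs + prompt_change_runs
    return cases * runs


baseline_calls = judge_calls_per_year(cases=100)  # 400, the figure above
busier_year = judge_calls_per_year(
    cases=100, announced_update_runs=3, prompt_change_runs=5
)  # 1200 with three provider updates and five prompt changes
```

Even the busier year stays at a small multiple of the baseline figure, because the cost scales with runs, not with production traffic.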


Further Reading

- №05 · April 2026. How to set up prompt regression testing. A 7-step guide to building regression tests for production prompts. Catch breakage before deploy with golden datasets, scoring rubrics, and LLM judges. Evaluation & Testing · 16 min read

- №06 · April 2026. How to version prompts: the 2026 guide. Prompt versioning captures every prompt change as an immutable artifact. Seven concrete steps, worked examples, and where it fits in your stack. Prompt Engineering · 13 min read

Issue №11 · Published MAY 13, 2026 · Prompt Assay